Our servers are getting ready to be awakened by the masses. As such, we needed to ensure they would not simply fall over if we got a sudden influx of traffic when we opened our doors to everyone.

When we first released our software, we quickly found that we needed to adjust some Apache and PostgreSQL settings in addition to installing a real PG connection pooler in the form of PgBouncer from the wonderful people at Skype. This is on top of our moderately heavy memcached usage that is already working at the application level. The specifics of this phase of development and the changes made to account for our load are for the next post. You can subscribe to my feed if you’d like to know when that is published.

For now, let’s get to the benchmarks.

Due to our previous changes, things were running smoothly. The various instances of the application were running well on their hardware. However, we hadn’t opened the doors for our service yet. We knew that we would need our servers to be able to handle much more load than they were currently handling. So, we set up some servers nearly identical to production and went to town with ApacheBench.

We’re currently running the following versions of applicable software for these tests:

For the test, I used ApacheBench, Version 2.0.40-dev. Data was collected by running the tests and entering the results into OpenOffice’s Calc. The graphs were also made with Calc.
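The copy-into-Calc step could also be scripted. The following is only a minimal sketch and not what was used for these benchmarks: it assumes ab's summary contains its usual "Requests per second" and "Time per request ... (mean)" lines, and the sample text, the numbers in it, and the function names are made up for illustration.

```python
import csv
import io
import re

# Made-up sample shaped like ab's summary lines; these are NOT benchmark results.
SAMPLE_AB_OUTPUT = """\
Concurrency Level:      50
Complete requests:      500
Failed requests:        0
Requests per second:    57.31 [#/sec] (mean)
Time per request:       872.450 [ms] (mean)
Time per request:       17.449 [ms] (mean, across all concurrent requests)
"""


def parse_ab_summary(text):
    """Pull requests/sec and mean time per request (ms) out of ab's report."""
    rps = re.search(r"^Requests per second:\s+([\d.]+)", text, re.MULTILINE)
    # Match only the plain "(mean)" line, not the "across all concurrent requests" one.
    tpr = re.search(r"^Time per request:\s+([\d.]+) \[ms\] \(mean\)", text, re.MULTILINE)
    if rps is None or tpr is None:
        raise ValueError("text does not look like an ab summary")
    return {
        "requests_per_second": float(rps.group(1)),
        "time_per_request_ms": float(tpr.group(1)),
    }


def to_csv_line(concurrency, summary):
    """Format one row that a spreadsheet such as Calc can import."""
    buf = io.StringIO()
    csv.writer(buf, lineterminator="\n").writerow(
        [concurrency, summary["requests_per_second"], summary["time_per_request_ms"]]
    )
    return buf.getvalue()


if __name__ == "__main__":
    print(to_csv_line(50, parse_ab_summary(SAMPLE_AB_OUTPUT)), end="")
```

Peak load would still have to be recorded separately, since ab does not report it.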

The versions of the two caches that were used are Squid Web Proxy Cache 2.6.STABLE5 and Alternative PHP Cache (APC), which is to be included in PHP 6.

The tests were run from a server that sits in the same data center as the server being tested to minimize latency issues. Each test was performed 3 times to try to get more accurate measurements. Every request was sent with the proper headers to ensure mod_deflate was being used. The number of requests (specified by the -n option) was 500 for any concurrency less than 100. For any concurrency greater than 100, 1000 requests were performed. The peak load numbers were gathered from watching top during each test.

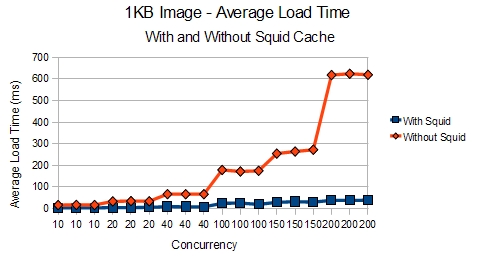

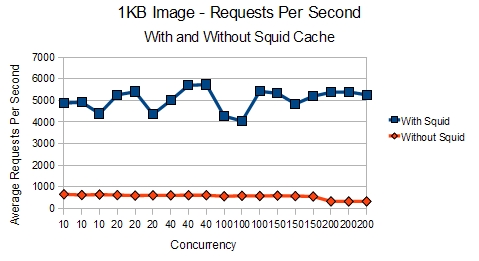

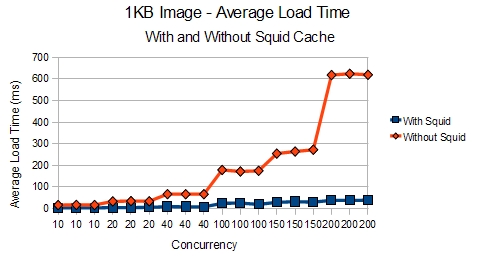

First off, let’s look at the results of a 1KB image with and without Squid Cache:

With this smaller file, the results show that Apache is clearly able to deliver roughly 700% more requests per second with Squid.

We can also see how Squid allows Apache to handle even 200 concurrent threads requesting the image without breaking a sweat.

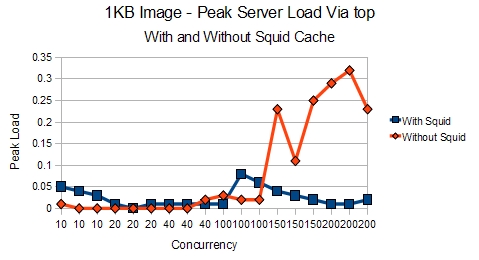

In addition, comparing the load with and without Squid shows that the server was relaxing, since it did not have to create a bunch of Apache processes.

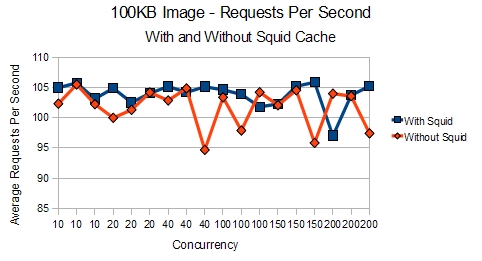

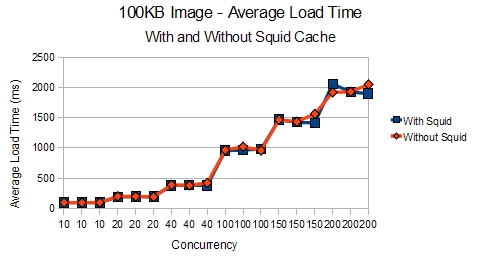

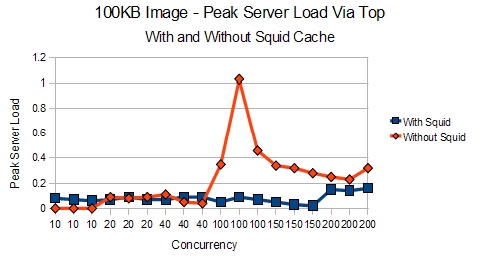

The same test was performed for a 100KB image to see if the results would be the same.

With the larger file, we can see that the differences are less apparent.

Even the load times look almost the same when you are using a 100KB image instead of a 1KB image.

However, the kicker is when you look at the load that the server is placed under. With Squid, the web server doesn’t have to work as hard since it doesn’t have to create all those Apache processes to answer requests for the image.

So, one can clearly see that for smaller objects and files, Squid can make a remarkable difference in what you can push out of your server. The results are much clearer when using a testing platform that can download embedded content. However, that would be suitable for a post some other time. For now, we’ll move on to the results of our other caching solution.

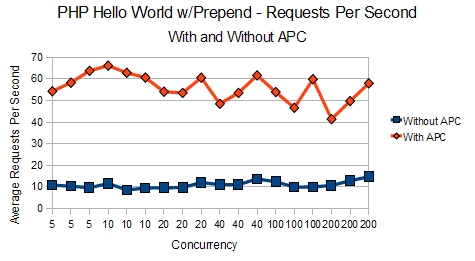

Seeing how Squid provided such excellent results, it was definitely time to look for a caching solution for PHP. APC was the natural choice considering its inclusion in the new version of PHP. After exploring it, we installed it and were astonished again by the results. Our server, which was keeling over under 10-20 requests per second, was now able to deliver 50-60 requests per second, up to a 400% increase in performance! But don’t take my word for it; I’ll let the charts speak for themselves.

These tests were performed on a simple Hello World PHP application:
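For anyone checking the arithmetic behind the percentages, the sketch below uses only the round request rates quoted above. The function name is just for illustration.

```python
def percent_increase(before, after):
    """Relative gain of `after` over `before`, expressed as a percentage."""
    return (after - before) / before * 100.0


if __name__ == "__main__":
    # Low end of both ranges: 10 req/s before APC, 50 req/s after.
    print(percent_increase(10, 50))  # 400.0
    # High end of both ranges: 20 req/s before APC, 60 req/s after.
    print(percent_increase(20, 60))  # 200.0
```

So the 400% figure corresponds to the low end of each range, meaning five times the original throughput. By the same arithmetic, the roughly 700% gain seen with Squid on the 1KB image means about eight times as many requests per second.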

<?php

echo 'Hello World';

?>

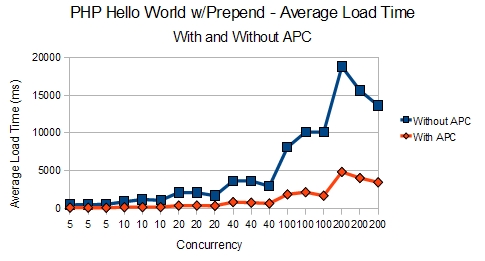

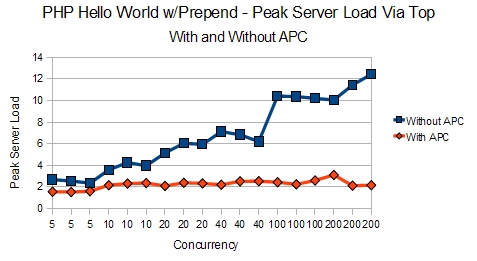

Our prepend configuration means both memcached and PostgreSQL were invoked when our simple Hello World test was run. This was ideal because it gives us a baseline for when more testing is performed on our actual application code. Here are the results of those tests:

It’s easy to see how APC allows for many more requests per second to be served.

The load times offer an even better look at how APC allows the server to remain calm even under high load. Obviously, 15-20 second load times were not acceptable. However, APC allowed the load times to remain under 5 seconds even with 200 concurrent threads slamming the server.

Again, some of the best information is seen in the peak loads. The server is, quite obviously, doing much less work thanks to APC. This is especially evident in the 100-200 concurrency levels when the server was showing loads of 10-12.

So, if you haven’t yet thought about installing Squid Cache or a PHP cache like APC, hopefully these benchmarks will be enough to convince you of their merit. Considering the ease with which these two caches can be installed and set up, you’re doing yourself and your hardware a disservice without them.

Well, that wraps up this post. Stay tuned for my next post for hints on tuning Apache configuration settings, PostgreSQL settings, and the trials and tribulations of moving an application from a simple site to a completely automated SaaS.

If you liked this post, then please consider subscribing to my feed.
